Research at MSP

This website summarizes the research activities at the MSP lab. Please refer to the publication section for further details.

Our long-term goal is to understand expressive interpersonal human communication and interaction from a multimodal signal processing perspective. In addition to spoken language, nonverbal cues such as hand gestures, facial expressions, rigid head motions, and speech prosody (e.g., pitch and energy) play a crucial role in expressing feelings, giving feedback, and displaying human affective states or emotions. Socially aware multimedia speech communication interfaces, which must both recognize and respond to human users, therefore have to incorporate the rich fabric of verbal and nonverbal cues expressed both auditorily and visually. Developing such interfaces is the central goal of our research. This core enabling multimedia technology can have an impact on a wide range of applications, including games, simulation systems, and other educational and entertainment systems.

There are four main research areas of interest:

- Affective state recognition

- Modeling and synthesis of human behavior

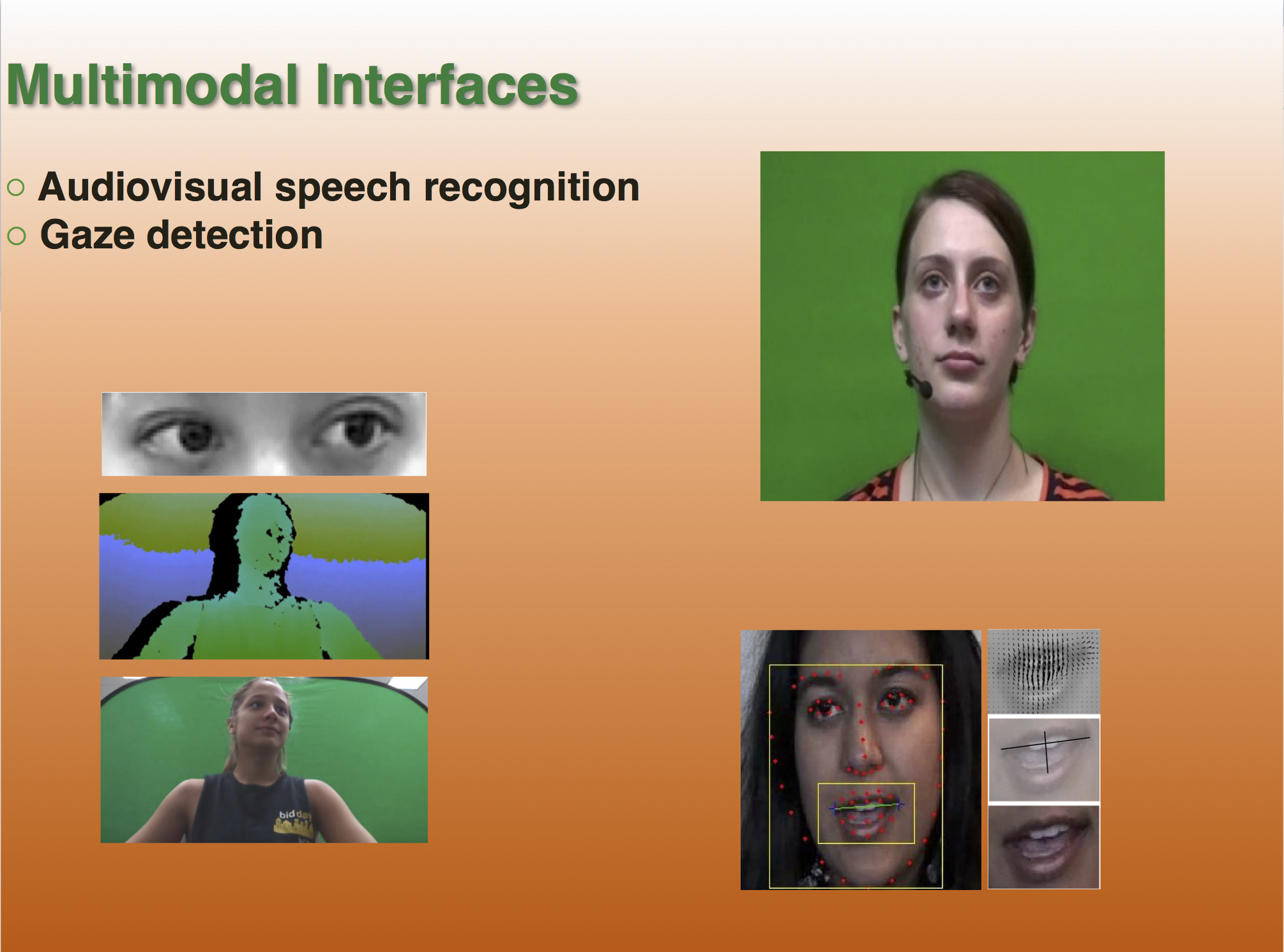

- Multimodal interfaces

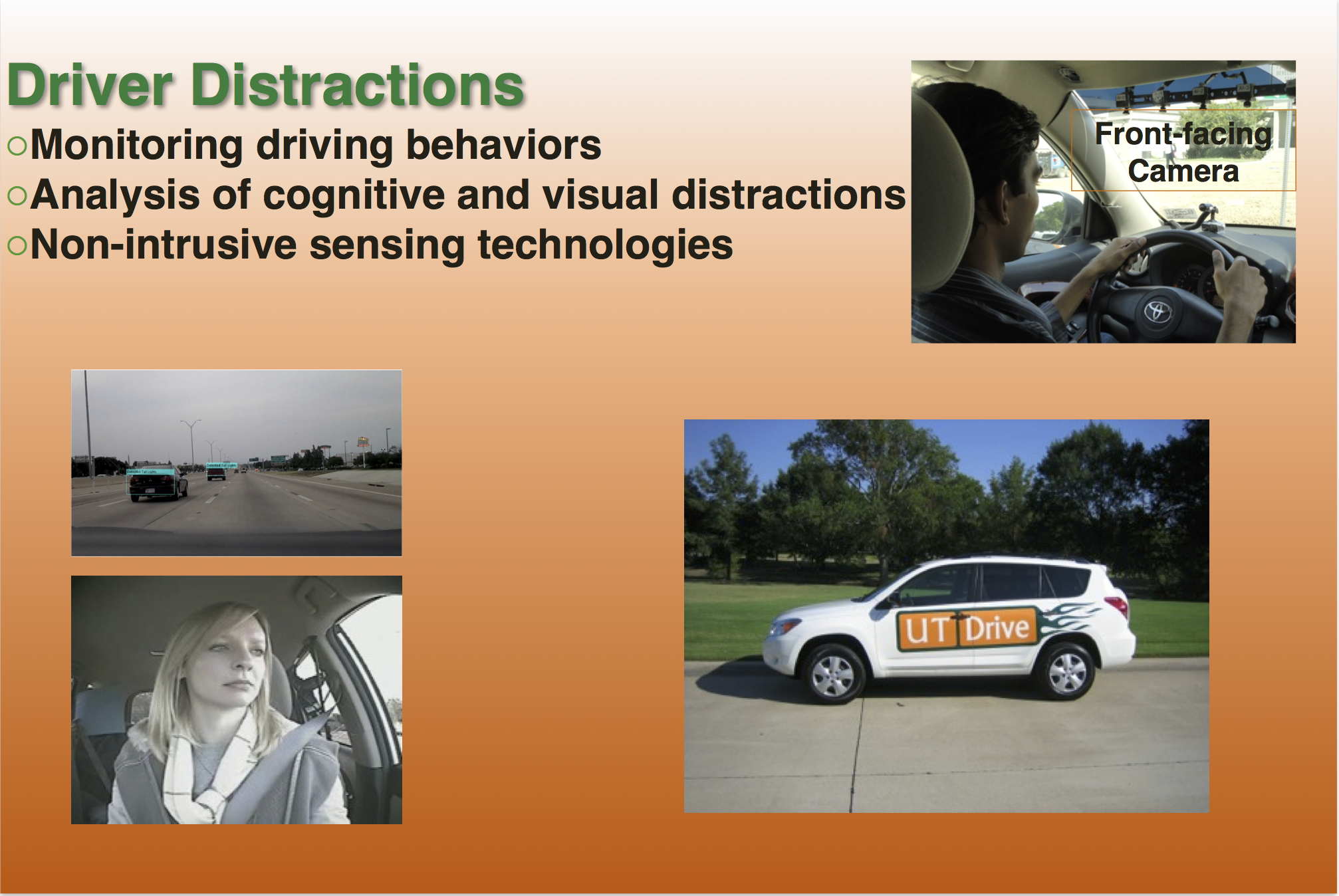

- Monitoring driver distraction
